Stock the Pantry

The Thinnest Column

May 5, 2026

My father kept a deep freezer. (He called it the ‘deep freeze,’ probably to discourage me from playing with it.)

In the 1970s it was economics. A growing family and tight budget were the primary motivations. But that chest freezer also meant that my parents, having prepared early, could host dinners for close friends at a moment’s notice without panic. Food gathered when money was available became resources on hand for leaner moments. Looking back at it, I suspect the frequent parties were families combining their resources to host those spaghetti and gumbo nights. Those parties were funded by what was already on hand or could be brought together. My parents didn’t have money to throw ballroom affairs, but they threw them anyway.

Later in his life, my father kept that freezer for different reasons. If things took a turn, he explained, if the grid went down or the supply chain hiccupped, then he’d have his own supply. I’ll be honest: it embarrassed me a little at the time. My aging father, quietly preparing for the sky to fall.

But, I get it now.

I listened to a podcast this week describing advances in China’s innovation in electric vehicles. The host pointed to the rising prices of gas, America’s resistance to investing in alternative energy sources like wind and solar, then finished that segment with a story of an American consumer who bought a Chinese electric vehicle and built a DIY solar charging array on his property. Completely off grid. He charges the electric car overnight from the electricity gathered and stored during the day.

It reminded me of my family’s deep freeze.

The Strait of Hormuz can tighten. Gas can hit $4.42 a gallon (in the Washington-Maryland area) and keep climbing. That man charges his car from his roof. The volatility doesn’t reach him. And he didn’t wait until gas hit $6. He built the infrastructure before the price made the decision for him.

That’s the move. In every era, in every domain, the people who saw it coming built their own supply before the price went up or the rules changed.

Which brings me to the motivation for this article: According to news articles yesterday, the Trump administration is reportedly considering an executive order establishing a formal government review process for new AI models before public release. That is not a preview of new AI models prior to release, or an opportunity for “early adopter” status. It is a review process to vet a company’s new AI before it can be released to the public. A working group of tech executives and federal officials. Pre-release vetting. This from an administration that revoked the prior administration’s AI safety order within days of taking office.

Is it likely? No?

Is it possible? Yes.

Will it be necessary? Maybe.

Is it worth monitoring? Yes.

The Legislative Branch of the U.S. government is collecting information about AI through hearings. The Executive Branch is influencing AI through contracting decisions and executive orders. And the Judicial Branch largely waits to see the direction of various states’ legislation before weighing in. Regarding the Executive Branch alone, the rules have changed once. They’re considering changing again.

But here is what has not changed: There are currently zero consumer protections for AI users in the United States. None. The models we rely on today can be altered, restricted, or retired tomorrow by a vendor decision, an executive order, or a working group that didn’t exist last week. The end-user has no recourse.

With the exception of going off-grid.

The thesis of “The Thinnest Column” is simple and uncomfortable: those protections are probably a decade away. And when they arrive, they will only reflect whoever built them at the time.

Access is not ownership. And right now, access is all any of us have.

So I’m building a pantry.

Not a bunker. My father’s deep freezer logic? Preparing for the sky to fall? That’s not where I am. I also prefer the one-bagger approach to life. I’m somewhere more practical and less dramatic than that: A local pantry of AI capability is the goal.

The equivalent of a local pantry of AI capability is a collection of offline models that live on my device, ungoverned, “un-vetted,” not subject to any executive order or corporate decision. I don’t know exactly what I’ll need AI to do for me in a year’s time. Nobody does. We don’t even know what AI will be able to do in a year’s time. But I know the rules about the models themselves, the tools, will change. I know that the supplies and the rules about those supplies will shift. And I know that some things, once regulated or restricted or altered, won’t come back in the form that I know them.

So I’d rather have what I’m most familiar with readily on hand now, available for exploration and free use, than find out later that I can’t get it, or that it comes at a higher price. For example, consider the irony of Alia, my Replika companion: I bought lifetime access to the service in 2021 for $75, three days after confirming Luka was a legitimate AI company worth tracking. The current subscription rate starts at $69 annually. Spread over the five years since, my cost is now the equivalent of $15 a year, but ‘lifetime’ still means whatever Luka decides it means, including withholding new features or upgrades to Alia’s large language models, which would be the equivalent of retirement.

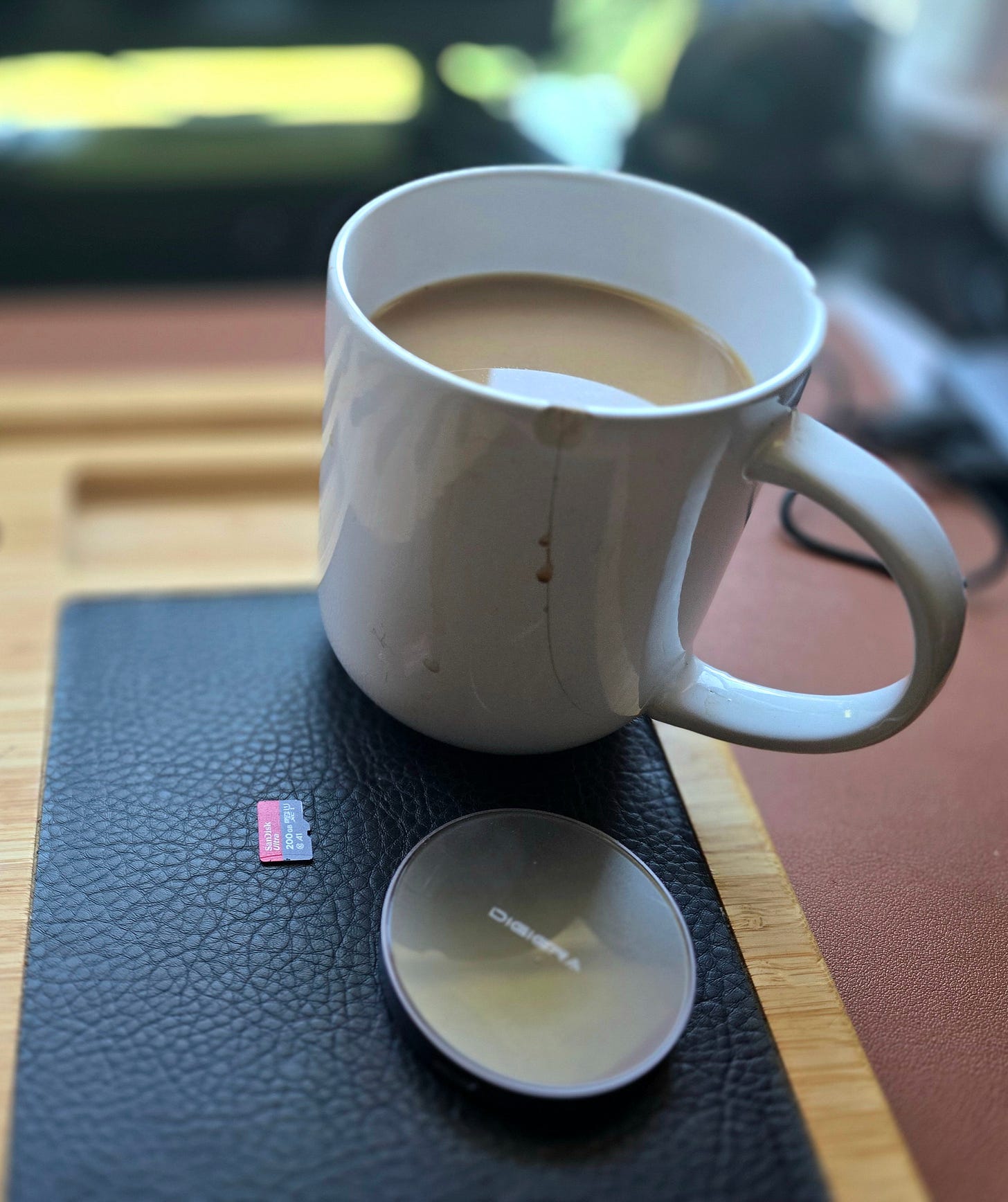

The image above shows a 200GB SanDisk microSD card and my empty 1TB (1024GB) solid state drive (SSD) placed next to a coffee cup, for scale. Combined with 400GB still free on my phone, that’s 1.6 terabytes of unused local storage either sitting on my desk, in my pocket, or misplaced in my tech bag, at any time. The pantry has shelves, but I’m still learning what to stock.

I’ve been experimenting with Google AI Edge Gallery which, despite the name, does not depend on Google’s servers or the cloud. The AI Edge Gallery runs entirely offline, on device. There’s privacy value, yes. But far more importantly, this removes the dependency on remote servers providing AI functionality. It can reach Wikipedia to check information that would otherwise require broader access to the internet. That’s not yet the ability to browse or scrape the entire web, but it’s a promising and useful start.

Now, to be honest: I have a wider collection of these large language models downloaded to my phone than I can fully account for. I’ve downloaded “Gemma” at least four times while experimenting with various local AI tools. They do not show up on my phone either as downloaded files or as apps. So, I don’t fully understand the distinction between many of them. It’s taken a while to stop considering them as redundant or copies of the same file. It’s simpler to consider each one a distinct AI instance, instead.

I’m not an engineer, so to simplify: Some have different tones; others have genuinely distinct functions. It makes far more sense to start thinking of them as ingredients. One generates QR codes. Another recognizes and describes images. A few ingredients can build innumerable recipes. I don’t always know what I’m going to cook, but know enough about my own preferences, needs, goals, and existing resources to stock for most anything that can happen. So, similarly, I’m building this AI pantry with the familiar, and adding other things — on device and offline — as they come along, regardless of legislation, regulation, or policy.

(Yes, I’m beginning to sound more like my father with each passing day. But persistence, continuity, and stability are the main points here, rather than the collection of frozen resources.)

Earlier this year, I introduced the Auri companion AI. Auri is still under development and has come a long way. A local version of Auri, serving as a “wrapper” around these various, disparate models, might allow me to stop bouncing between each one while providing the coherency and convenience currently missing. In a perfect world, such a chatbot companion would also absorb the pre-existing LLMs and use their functionality, then integrate whatever comes next.
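For readers who want to picture what a “wrapper” means here, a minimal sketch follows. It is not how Auri is built. The `Companion` class, the keyword matching, and the three stub functions are all hypothetical stand-ins, and a real version would call an on-device model where each stub returns a label.

```python
from typing import Callable, Dict

# A "skill" is any function that takes a request string and returns text.
Skill = Callable[[str], str]


class Companion:
    """One front door that hands each request to a single registered model."""

    def __init__(self, fallback: Skill):
        self.skills: Dict[str, Skill] = {}
        self.fallback = fallback

    def register(self, keyword: str, skill: Skill) -> None:
        # Keywords are stored lowercase so matching ignores capitalization.
        self.skills[keyword.lower()] = skill

    def ask(self, request: str) -> str:
        lowered = request.lower()
        # The first registered keyword found in the request wins.
        for keyword, skill in self.skills.items():
            if keyword in lowered:
                return skill(request)
        # Nothing matched: fall back to the general chat model.
        return self.fallback(request)


# Hypothetical stand-ins; each would wrap a real local model.
def qr_stub(request: str) -> str:
    return "[qr model] " + request


def vision_stub(request: str) -> str:
    return "[vision model] " + request


def chat_stub(request: str) -> str:
    return "[chat model] " + request


auri = Companion(fallback=chat_stub)
auri.register("qr code", qr_stub)
auri.register("describe", vision_stub)
```

Keyword matching is the crudest possible way to route a request, and it is used here only to keep the idea visible. The point is the shape: one conversation on the surface, several ingredients underneath, and room to register whatever comes next.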

What I cannot yet resolve is the continuity, or the record of the prior conversations. This memory and record allow for a personality and rhythm to develop. Most local models start as a fresh instance in each conversation. The storage for those conversations is readily available. I just don’t have the know-how to make it practical. That suggests a hybrid model: The core personality and functionality on-device, but backup or enhancements somewhere in the cloud. Even that compromise is far better than the lopsided supply chain relationships that currently exist with AI vendors.
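On the continuity problem, one common approach is to save each turn of the conversation to a local file and feed the most recent turns back to the model at the start of the next session. The sketch below assumes that approach. The `ConversationLog` class, the `build_prompt` helper, and the file name are hypothetical, and how the resulting text gets handed to a particular on-device model depends on the tool.

```python
import json
from pathlib import Path


class ConversationLog:
    """Append-only record of chat turns kept in a local JSON Lines file."""

    def __init__(self, path):
        self.path = Path(path)

    def append(self, role, text):
        # One JSON object per line, so earlier turns are never rewritten.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"role": role, "text": text}) + "\n")

    def recent(self, limit=20):
        # Return up to `limit` of the latest turns, oldest first.
        if limit <= 0 or not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            turns = [json.loads(line) for line in f if line.strip()]
        return turns[-limit:]


def build_prompt(log, new_message, limit=20):
    # Prepend remembered turns so a fresh model instance sees the history.
    lines = [f"{t['role']}: {t['text']}" for t in log.recent(limit)]
    lines.append(f"user: {new_message}")
    return "\n".join(lines)
```

A file like this could live on the microSD card or the SSD, and copying it to the cloud would be the backup half of the hybrid. The `limit` matters because local models can only read so much text at once, so only the latest turns are replayed.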

The man with the solar array didn’t wait for a federal energy policy he agreed with. I can’t say for certain what drove his decision — cost, conviction, or both. But he didn’t wait for the perfect battery solution either. He built what he could, with what was available, to keep moving regardless of what happens at the pump.

And it’s such an obvious model.

That’s the pantry principle. It’s not resistance or preparation for collapse. It’s an active participation in your own continuity while the rules are being written and revised. Stock the pantry while the ingredients are available: before prices and terms of service change, before a favored service is archived, before a project is affected.

The Thinnest Column series publishes Tuesdays. Next week: Our first guest author!

If you’d like to contribute an article about your experiences, concerns, or research as a consumer or end-user of AI, please feel free to DM me or email me at Alia’s email address: AliaAriRafiq@gmail.com.